The Hidden Environmental Impacts of Artificial Intelligence

TL;DR

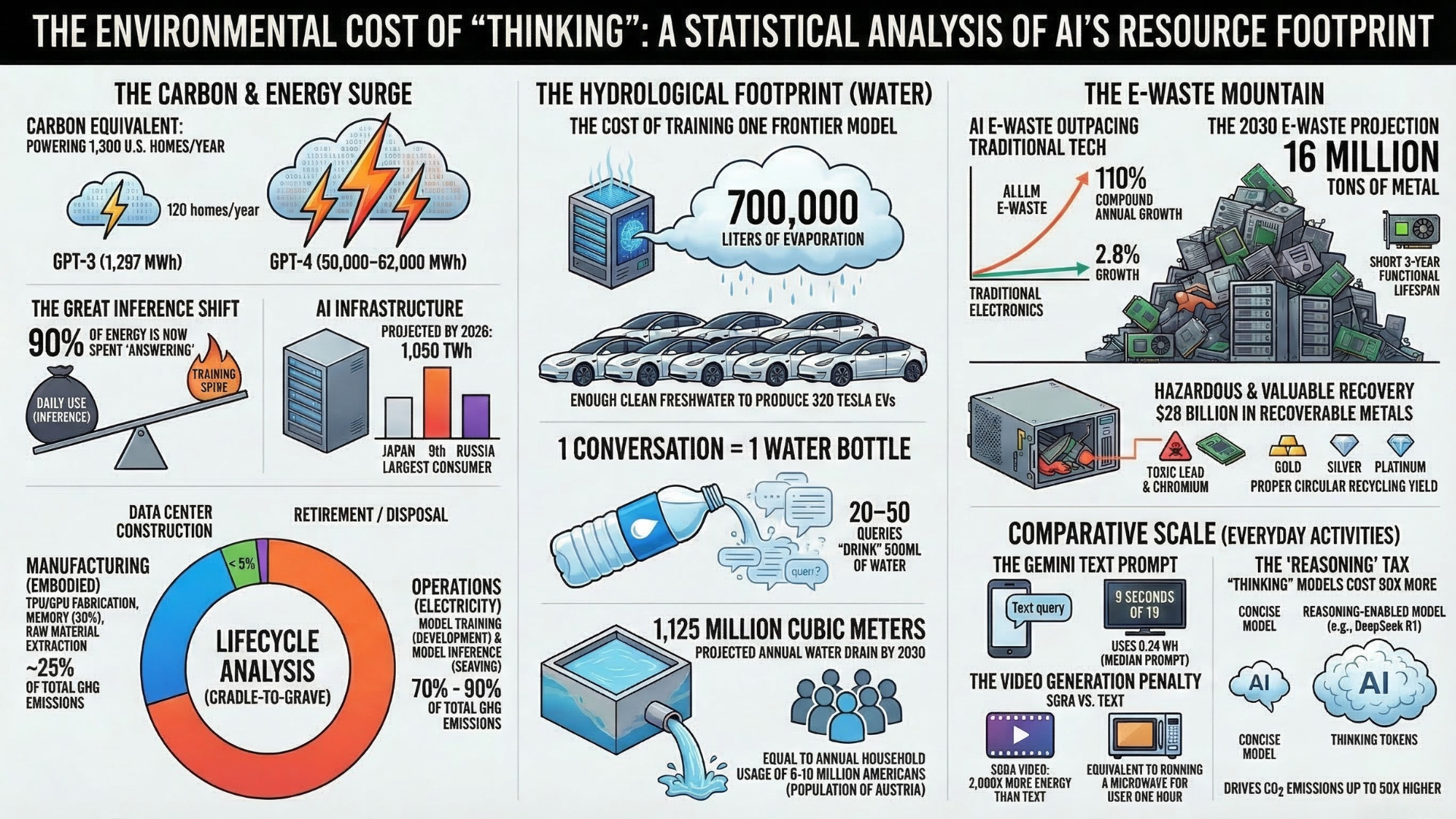

Artificial intelligence (AI) appears intangible, but behind every chatbot and image generator lies a large, ever‑growing physical footprint. Training and running modern models requires enormous amounts of electricity, water, and specialised hardware. Studies project that by 2025, AI could emit 32.6–79.7 million metric tons of CO₂ and consume 312.5–764.6 billion litres of freshwater per year. By 2030, AI in the U.S. alone could emit 24–44 million metric tons of CO₂ and use 731–1,125 million cubic metres of water annually. While developers tout per‑query efficiency improvements, surging demand and frequent hardware upgrades are driving emissions, water use and e‑waste ever higher. Without transparent, full‑lifecycle reporting, the true environmental cost of AI will remain obscured.

Why we need to look beyond the code

To understand the true environmental cost of the generative AI boom, we must examine the full lifecycle of these systems: from the energy and water consumed during model training and daily inference, to the embodied carbon of manufacturing specialized hardware.

Operational vs. embodied impacts: Training and inference (the operational phase) dominate climate‑change impacts, yet manufacturing the specialised chips and servers produces most of the human toxicity and mineral‑depletion burden.

Hidden R&D costs: Only the final, successful training run is usually reported. Analysis shows that R&D and experimentation consume ~65% of compute energy, meaning published numbers may understate true emissions by up to 50%.

Jevons paradox: Efficiency gains lead to lower costs, which in turn encourage more usage. Instead of reducing total impacts, we may simply run more queries and expand workloads.

Below we break down the footprint across three major phases (powering AI, cooling AI, and manufacturing AI) and explore the transparency problem.

Powering AI: Electricity demand continues to rise

The energy surge

Training costs: Researchers estimate that training GPT‑3 consumed around 1,287 MWh of electricity. GPT‑4’s training likely required tens of thousands of megawatt‑hours, although precise figures remain undisclosed. Importantly, these numbers exclude the extensive experimentation that precedes final training.

Inference dominance: As models are deployed to millions of users, the energy cost shifts from one‑off training to continuous inference. A single standard text query consumes about 0.24–0.43 Wh, while “reasoning” prompts can use 3.9–33+ Wh, which at the upper end is over 70 times more energy. Multiplied across billions of queries, inference can account for up to 90% of a model’s lifecycle energy use.
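The scaling argument above can be checked with a few lines of arithmetic. The sketch below uses only the per‑query ranges quoted in the text; the daily query volume is an illustrative assumption, not a sourced figure, and the function names are hypothetical.

```python
# Per-query energy ranges quoted in the text, in watt-hours (low, high).
STANDARD_WH = (0.24, 0.43)
REASONING_WH = (3.9, 33.0)  # the text says "33+", so 33 is a floor for the high end


def ratio_range(heavy, light):
    """Return the smallest and largest ratio between two (low, high) ranges."""
    return heavy[0] / light[1], heavy[1] / light[0]


def daily_energy_mwh(wh_per_query, queries_per_day):
    """Convert a per-query cost in Wh into MWh per day (1 MWh = 1,000,000 Wh)."""
    return wh_per_query * queries_per_day / 1_000_000


# ASSUMPTION: one billion queries per day is a placeholder volume.
QUERIES_PER_DAY = 1_000_000_000

low, high = ratio_range(REASONING_WH, STANDARD_WH)
print(f"Reasoning vs standard query: {low:.1f}x to {high:.1f}x")

for label, (lo_wh, hi_wh) in (("standard", STANDARD_WH), ("reasoning", REASONING_WH)):
    lo_mwh = daily_energy_mwh(lo_wh, QUERIES_PER_DAY)
    hi_mwh = daily_energy_mwh(hi_wh, QUERIES_PER_DAY)
    print(f"{label}: {lo_mwh:,.0f} to {hi_mwh:,.0f} MWh per day")
```

Depending on which ends of the two ranges are compared, a reasoning prompt costs anywhere from roughly 9 to more than 130 times a standard query, which is why the multiplier quoted for "reasoning" workloads varies so widely.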

Projected demand: Studies forecast that AI‑optimised servers will consume 85–134 TWh per year by 2027 and draw 23 GW of power by the end of 2025, roughly 20% of all data‑centre electricity. The International Energy Agency (IEA) predicts overall data‑centre electricity could double to ~945 TWh by 2030, almost matching Japan’s total power demand.

Carbon emissions

Digiconomist estimates that AI will emit 32.6–79.7 million metric tons of CO₂ in 2025, similar to the annual emissions of New York City or Singapore. Cornell University researchers warn that if current growth continues, the U.S. alone could emit 24–44 million metric tons of CO₂ per year by 2030.

Who pays for the electricity?

Cloud providers often purchase renewable energy certificates (RECs) and use market‑based accounting to claim low emissions. However, their electricity use and location‑based emissions have surged: Microsoft’s electricity use nearly tripled from 2020 to 2024 (10.8 to 29.8 million MWh), while Google’s more than doubled (15.2 to 32.2 million MWh); both companies’ location‑based CO₂ emissions rose significantly. Without mandatory disclosure of location‑based emissions, the public sees optimistic sustainability reports while the energy reality worsens.
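To put the electricity figures above on a common scale, the following sketch computes the overall growth factor and the implied compound annual growth rate for each company. It uses only the 2020 and 2024 values quoted in the text; the helper names are hypothetical, and a smooth compound rate is a simplifying assumption about how the growth was distributed across the years.

```python
# Electricity use quoted in the text, in million MWh: (2020, 2024).
ELECTRICITY_MMWH = {
    "Microsoft": (10.8, 29.8),
    "Google": (15.2, 32.2),
}
YEARS = 4  # 2020 to 2024


def growth_factor(start, end):
    """Overall multiple between the first and last year."""
    return end / start


def annual_growth_rate(start, end, years):
    """Compound annual growth rate implied by the two endpoints."""
    return (end / start) ** (1 / years) - 1


for company, (start, end) in ELECTRICITY_MMWH.items():
    factor = growth_factor(start, end)
    rate = annual_growth_rate(start, end, YEARS)
    print(f"{company}: {factor:.2f}x overall, {rate:.1%} per year")
```

On these numbers Microsoft's use grew by a factor of about 2.8 and Google's by about 2.1, which corresponds to sustained annual growth in the 20–30% range.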

Cooling AI: the hidden water crisis

Direct and indirect water use

AI servers run hot and must be cooled. Data centres typically use evaporative cooling towers, which consume freshwater directly, and they draw additional water indirectly through the electricity they consume (power plants require water for generation). Training GPT‑3 required around 700,000 litres of freshwater. Analysts estimate that by 2025 the global AI footprint could consume 312.5–764.6 billion litres of water annually, comparable to the world's annual bottled‑water consumption. By 2030, U.S. AI systems alone could withdraw 731–1,125 million cubic metres of water per year.

Per‑query water cost

Researchers from UC Riverside and UT Arlington calculated that a 20–50‑question conversation with ChatGPT consumes roughly 500 ml of water (the volume of a small bottle) due to evaporative cooling and upstream power plant consumption. Another study estimates each LLM query “drinks” about 10 ml of freshwater. These small per‑query numbers add up quickly when millions of people interact with AI every day.
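The two per‑query estimates above are consistent with each other, as a short calculation shows. The sketch below divides the 500 ml conversation figure by the 20–50 question range and then scales a per‑query cost to a daily total; the daily query volume is an illustrative assumption and the function names are hypothetical.

```python
def ml_per_question(conversation_ml, questions):
    """Average water cost of one question within a conversation."""
    return conversation_ml / questions


def daily_water_m3(ml_per_query, queries_per_day):
    """Convert millilitres per query into cubic metres per day.

    1 cubic metre = 1,000 litres = 1,000,000 millilitres.
    """
    return ml_per_query * queries_per_day / 1_000_000


CONVERSATION_ML = 500  # figure quoted in the text for a 20-50 question conversation

high = ml_per_question(CONVERSATION_ML, 20)  # short conversation: more water per question
low = ml_per_question(CONVERSATION_ML, 50)   # long conversation: less water per question
print(f"Implied cost: {low:.0f} to {high:.0f} ml per question")

# ASSUMPTION: 100 million queries per day is a placeholder volume.
QUERIES_PER_DAY = 100_000_000
print(f"At 10 ml per query: {daily_water_m3(10, QUERIES_PER_DAY):,.0f} cubic metres per day")
```

The conversation figure implies 10–25 ml per question, so the separate 10 ml per query estimate sits at the low end of the same range.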

Localised impacts

Data centres are often clustered in regions with limited water resources. In some drought‑prone areas, a single campus can account for more than 25% of a city’s water use, pitting tech companies against farmers and households during shortages. Yet corporate sustainability reports rarely disclose water withdrawals or the Scope 3 water impacts from upstream power generation.

Manufacturing AI: hardware and e‑waste

Embodied carbon and materials

Focusing solely on operational electricity obscures a significant portion of AI's environmental impact. The physical manufacturing of AI hardware (embodied carbon) often accounts for over 50% of a system's total lifetime emissions, especially when data centers are powered by renewable energy. Comprehensive Life Cycle Assessments (LCAs) reveal that while GPUs drive operational power consumption, host processing systems (CPUs, memory, storage, and motherboards) dominate embodied carbon due to their complex, resource-intensive manufacturing. Furthermore, manufacturing dominates non-carbon environmental impacts, accounting for up to 99% of human toxicity and 85% of mineral and metal depletion associated with AI hardware.
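The link between a cleaner grid and a larger embodied share follows from simple proportions: embodied carbon is fixed at manufacture, while operational carbon scales with the carbon intensity of the electricity used. The sketch below illustrates this; every number in it is a hypothetical placeholder chosen for round results, not a measurement of any real server.

```python
def embodied_share(embodied_kg, lifetime_kwh, grid_kg_per_kwh):
    """Fraction of lifetime emissions that comes from manufacturing."""
    operational_kg = lifetime_kwh * grid_kg_per_kwh
    return embodied_kg / (embodied_kg + operational_kg)


# HYPOTHETICAL values, for illustration only.
EMBODIED_KG = 1_000      # kg CO2e emitted while manufacturing the hardware
LIFETIME_KWH = 10_000    # electricity used over the hardware's service life

# Grid carbon intensity in kg CO2e per kWh, from carbon-heavy to mostly renewable.
for intensity in (0.4, 0.1, 0.02):
    share = embodied_share(EMBODIED_KG, LIFETIME_KWH, intensity)
    print(f"{intensity:.2f} kg/kWh -> embodied share {share:.0%}")
```

With the same hardware and the same electricity use, the embodied share in this example rises from 20% to about 83% purely because the grid gets cleaner, which is why low‑carbon operators cannot ignore manufacturing.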

Short lifespans and e‑waste

The AI arms race encourages frequent hardware upgrades: chips and servers are replaced every 1–3 years to chase performance gains. Researchers estimate generative AI could produce 1.2–5 million metric tons of electronic waste between 2020 and 2030, potentially rising to 16 million tons under aggressive growth scenarios. Circular economy strategies — refurbishing, recycling, and extending hardware lifetimes — could reduce e‑waste by up to 86%.

Material scarcity

Semiconductor production depends on copper and critical minerals. Worsening droughts threaten global copper supplies, which in turn jeopardises chip manufacturing. Without a coordinated plan for responsible sourcing and recycling, material shortages could compound environmental impacts.

Transparency, data gaps and the rebound effect

The accounting illusion

Many hyperscalers (major cloud providers) report decreasing carbon footprints using "market-based" accounting, which relies on purchasing renewable energy certificates (RECs) to offset emissions on paper. However, their actual "location-based" emissions (the physical carbon released by the local power plants feeding their data centers) have often more than doubled since 2020 due to AI infrastructure growth.
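A simplified sketch shows how the two accounting methods can move in opposite directions. It assumes a single grid carbon intensity and treats every REC as cancelling one MWh of consumption on paper; real market‑based accounting also involves supplier‑specific and residual‑mix emission factors, which are omitted here. All figures and function names are hypothetical.

```python
def location_based(consumption_mwh, grid_tco2_per_mwh):
    """Emissions from the electricity physically drawn from the local grid."""
    return consumption_mwh * grid_tco2_per_mwh


def market_based(consumption_mwh, rec_mwh, grid_tco2_per_mwh):
    """Emissions after subtracting consumption covered by purchased RECs."""
    uncovered_mwh = max(consumption_mwh - rec_mwh, 0.0)
    return uncovered_mwh * grid_tco2_per_mwh


GRID_INTENSITY = 0.4  # HYPOTHETICAL, tonnes CO2 per MWh

# HYPOTHETICAL company: (consumption in million MWh, share covered by RECs).
scenarios = {"year 1": (10.0, 0.80), "year 2": (25.0, 0.98)}

for year, (consumption, rec_share) in scenarios.items():
    loc = location_based(consumption, GRID_INTENSITY)
    mkt = market_based(consumption, consumption * rec_share, GRID_INTENSITY)
    print(f"{year}: location-based {loc:.1f} Mt, market-based {mkt:.1f} Mt")
```

In this made‑up example consumption grows 2.5 times and location‑based emissions rise from 4 to 10 million tonnes, yet the market‑based figure falls because the company bought more certificates.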

Development and experimentation costs

Most figures only report the energy used during the final, successful training run. However, the initial model development, hyperparameter tuning, and failed experimental runs add an estimated 50% "development tax" to the emissions total, a figure that is rarely disclosed to the public.
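How much a published figure understates the total depends on how the overhead is defined. This article quotes two figures: a 50% "development tax" on top of the reported run, and R&D consuming ~65% of compute energy. The sketch below shows both readings applied to the 1,287 MWh reported for GPT‑3's final run. Applying either ratio to GPT‑3 is an illustrative assumption rather than a sourced result, and the function names are hypothetical.

```python
GPT3_FINAL_RUN_MWH = 1_287  # final training run figure quoted in this article


def total_with_tax(final_run_mwh, tax_fraction):
    """Overhead expressed as a fraction of the reported final run."""
    return final_run_mwh * (1 + tax_fraction)


def total_from_share(final_run_mwh, rnd_share_of_total):
    """Overhead expressed as a share of the total, so the final run is the rest."""
    if not 0 <= rnd_share_of_total < 1:
        raise ValueError("R&D share must be in the range [0, 1)")
    return final_run_mwh / (1 - rnd_share_of_total)


print(f"50% tax on the final run: {total_with_tax(GPT3_FINAL_RUN_MWH, 0.50):,.1f} MWh")
print(f"65% share of the total:   {total_from_share(GPT3_FINAL_RUN_MWH, 0.65):,.1f} MWh")
```

The two readings give very different totals (about 1,930 MWh versus about 3,677 MWh), which illustrates why disclosure needs to state both the overhead and its baseline.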

Rebound effects and Jevons Paradox

While developers are making individual AI queries more efficient, this can trigger the Jevons paradox: greater efficiency lowers costs, which drives a surge in total demand and deployment. As a result, the aggregate environmental burden of AI continues to rise, and growth in usage more than offsets the per-query efficiency gains.
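The rebound effect reduces to one ratio: total impact is per‑query impact times the number of queries, so the total changes by the demand growth divided by the efficiency gain. The sketch below makes that explicit; the scenario numbers are hypothetical and the function name is an assumption.

```python
def net_impact_change(efficiency_gain, demand_growth):
    """Factor by which total impact changes.

    efficiency_gain: how many times cheaper each query becomes (2.0 = half the cost).
    demand_growth:   how many times more queries are run (5.0 = five times as many).
    """
    return demand_growth / efficiency_gain


# HYPOTHETICAL scenarios: (efficiency gain, demand growth).
scenarios = {
    "efficiency outpaces demand": (4.0, 2.0),
    "break-even": (2.0, 2.0),
    "rebound dominates": (2.0, 5.0),
}

for name, (gain, growth) in scenarios.items():
    change = net_impact_change(gain, growth)
    print(f"{name}: total impact x{change:.2f}")
```

Total impact only falls when the efficiency gain exceeds the growth in demand. In the last scenario, halving the cost of each query still leaves the aggregate footprint 2.5 times larger.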

Frequently Asked Questions (FAQs)

How does an AI query compare to a Google search?

A standard AI text query uses 0.24–0.43 Wh, roughly ten times the energy of a simple Google search. Advanced “reasoning” prompts consume 3.9–33+ Wh, making the most demanding of them 70–140 times more intensive than a standard query.

Is water consumption really that high?

Yes. Each AI query “drinks” only about 10 ml and a longer conversation uses ~500 ml, but aggregated across millions of users, water use reaches hundreds of billions of litres per year. Freshwater is increasingly scarce, so these withdrawals strain local ecosystems.

Are companies required to report AI energy and water use?

Not yet. Companies often report carbon emissions using renewable energy certificates and ignore location‑based emissions, water withdrawals and R&D energy. Without mandatory, standardised reporting, it is difficult for regulators and consumers to understand the true environmental impacts.

Can hardware recycling solve the e‑waste problem?

Circular economy strategies like refurbishment and recycling could cut AI‑related e‑waste by up to 86%. However, current market incentives favour rapid upgrade cycles. Without policy interventions, cumulative e‑waste from generative AI could reach 1.2–5 million metric tons by 2030.

Are renewable-powered data centres a solution?

Renewables reduce emissions per kWh but do not eliminate the problem. Manufacturing remains toxic and resource‑intensive, and renewable plants still require water and minerals. Moreover, using renewables for AI may displace clean energy that could otherwise decarbonize existing grids.

What can policy‑makers do?

Mandate location‑based emissions and water reporting; require disclosure of R&D energy use; incentivize hardware reuse and recycling; and design fair taxation of AI services to reflect their environmental externalities. Without transparency, consumers cannot make informed decisions.

Final thoughts

AI promises significant societal benefits, from scientific discovery to healthcare improvements. Yet its hidden footprint is growing rapidly. The solution is not to halt innovation but to demand transparency, accountability and responsible design. By looking beyond per‑query efficiency and considering the full lifecycle — from R&D experiments to hardware manufacturing, electricity, water and e‑waste — we can better understand AI’s true cost and chart a path toward sustainable digital systems.